The colored paths are labeled training data, just like in my last post on this.

The title gives the "answer" for a test video:

Could you tell from just this what the sequence of gestures was?

Not perfectly, but way better than chance.

See a couple more examples by clicking:

I'm sure that a prediction method based only on these principal components isn't the best way to go (is it ever? except for reducing the size of your problem for computational purposes), but I'd like to try -- then at least I can visualize my algorithm very nicely and see where it's failing.

And a few more (first ten from "devel01" test data) here (pdf, not an animation).

Monday, December 19, 2011

Sunday, December 18, 2011

speaking of machine learning

Umm, not exactly:

And "Albanian" doesn't come first ("Africaans") does, so it's not even that kind of bug.

Saturday, December 17, 2011

Defining Churn Rate--is instantaneous better?

I enjoyed this post on defining "churn rate" at the Shopify tech blog, though I don't remember at all how it ended up in my reading list.

The author goes through ways you might define define "churn rate", showing that each of them could be misleading.

Like a good ex-calculus teacher (from one year in grad school), I wondered: Isn't any "rate" more naturally understood as an instantaneous quantity? Doesn't the problem come from the fact that he's trying to understand churn over an interval first?

To illustrate, here's my oversimplified model:

The author goes through ways you might define define "churn rate", showing that each of them could be misleading.

Like a good ex-calculus teacher (from one year in grad school), I wondered: Isn't any "rate" more naturally understood as an instantaneous quantity? Doesn't the problem come from the fact that he's trying to understand churn over an interval first?

To illustrate, here's my oversimplified model:

- You start with some number of customers (initial_customers) at time t=1.

- Each day you gain new customers at some number of new customers, drawn from a Poisson distribution with expected value expected new customer rate.

- Each day you lose some proportion of your customers, drawn from a binomial distribution where the expected value of the proportion you lose is your daily churn rate.

I suspect that everything I say will apply also to more complicated/realistic models.

Here's a silly simulation (here's code for the simulation) of this model, where I've determined the expected churn rate (flat, then slopes up) and expected new customer rate (flat).

Churn rates:

Daily number of customers:

Assuming your number of customers doesn't change too much in a day, the daily churn rate is almost like an instantaneous rate.

Here's a silly simulation (here's code for the simulation) of this model, where I've determined the expected churn rate (flat, then slopes up) and expected new customer rate (flat).

Churn rates:

Daily number of customers:

Assuming your number of customers doesn't change too much in a day, the daily churn rate is almost like an instantaneous rate.

So, I have two notions of daily churn rate:

The definition he ended up with is an appropriate weighted average of daily churn rate and he must have been thinking of this.

Why take an average over a period? Because actual churn rates are noisy, so averaging is one way to smooth out that noise.

But once we're thinking in these terms, aren't there all sorts of standard time series methods for helping us model (and even project) churn rates?

In other words: Averaging isn't the only way to separate the "noise (randomness in churn) from the "signal" (expected value of churn).

I'd love to hear about anything I'm missing here. I've never thought about this before, so forgive me if I'm very confused.

Why take an average over a period? Because actual churn rates are noisy, so averaging is one way to smooth out that noise.

But once we're thinking in these terms, aren't there all sorts of standard time series methods for helping us model (and even project) churn rates?

In other words: Averaging isn't the only way to separate the "noise (randomness in churn) from the "signal" (expected value of churn).

I'd love to hear about anything I'm missing here. I've never thought about this before, so forgive me if I'm very confused.

Sunday, December 11, 2011

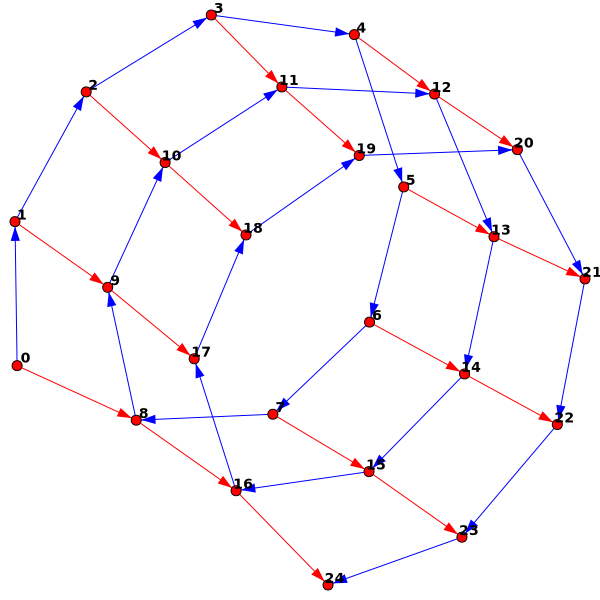

Visualizing Gestures as Paths

Kaggle is hosting an exciting new competition in which the object is to learn to identify sequences of gestures from just one example of each gesture. I would bet this competition has a lot of potential to attract academics interested in machine learning.

The competition comes with sample code for importing the datasets (AVI videos) to MATLAB, but right now I don't have MATLAB (although a recent post from one of my favorite bloggers reminded me to obtain it even before this annoyance).

The other tools I've used for data analysis are R and Octave (a free program similar to MATLAB). The best option I found for importing the data was Octave's 'video' package (see below the fold for installation tips). Please let me know if you find other possibilities!

The data come in batches, so I imported the first batch of data, saved it, and loaded it in R. When imported the data, I also shrunk each image, for two reasons:

Then treating each frame (image) as one "row" of data, plotted the first two principal components (of the training data only) as a 'quick and dirty' way to visualize the data. In this plot, the points represent frames, and the colors encode which video each frame came from:

Observations:

All of my code for this is available on Github.

The competition comes with sample code for importing the datasets (AVI videos) to MATLAB, but right now I don't have MATLAB (although a recent post from one of my favorite bloggers reminded me to obtain it even before this annoyance).

The other tools I've used for data analysis are R and Octave (a free program similar to MATLAB). The best option I found for importing the data was Octave's 'video' package (see below the fold for installation tips). Please let me know if you find other possibilities!

The data come in batches, so I imported the first batch of data, saved it, and loaded it in R. When imported the data, I also shrunk each image, for two reasons:

- Smaller dataset is easier to deal with (shrinking each dimension by a factor of 3 shrinks the final dataset by a factor of 9).

- I also hoped that blurring the fine distinctions of a larger image might cause each video to trace out more of a continuous path.

Then treating each frame (image) as one "row" of data, plotted the first two principal components (of the training data only) as a 'quick and dirty' way to visualize the data. In this plot, the points represent frames, and the colors encode which video each frame came from:

Observations:

- I was surprised the paths don't trace out paths that are more continuous.

- Although they do trace out somewhat continuous paths

- Each gesture/video traces out a somewhat distinctive path

- Most gestures/videos begin and in roughly the same region (this makes sense -- each video seems to begin and end with the person in roughly the same position).

- Probably it would make more sense to include the "test" data in my PCA.

- The real question is how much the appropriate segment of each path in the "test" data resemble the corresponding path in the training data.

- I want to visualize the data (and do my learning) with an embedding/transformation that makes more sense than PCA. Presumably there is some structure in the set of all images, and a method like Laplacian Eigenmaps or ISOMAP will presumably do a better job taking advantage of that.

All of my code for this is available on Github.

Saturday, November 26, 2011

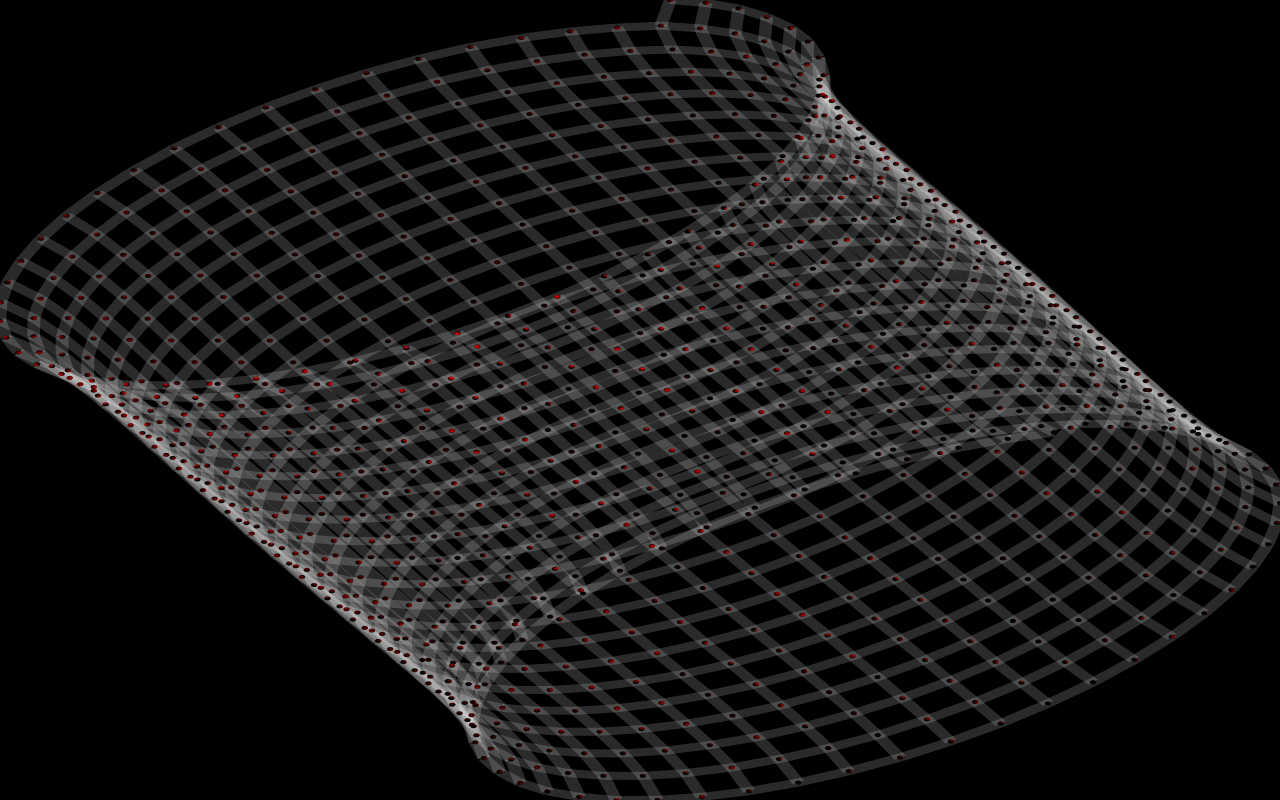

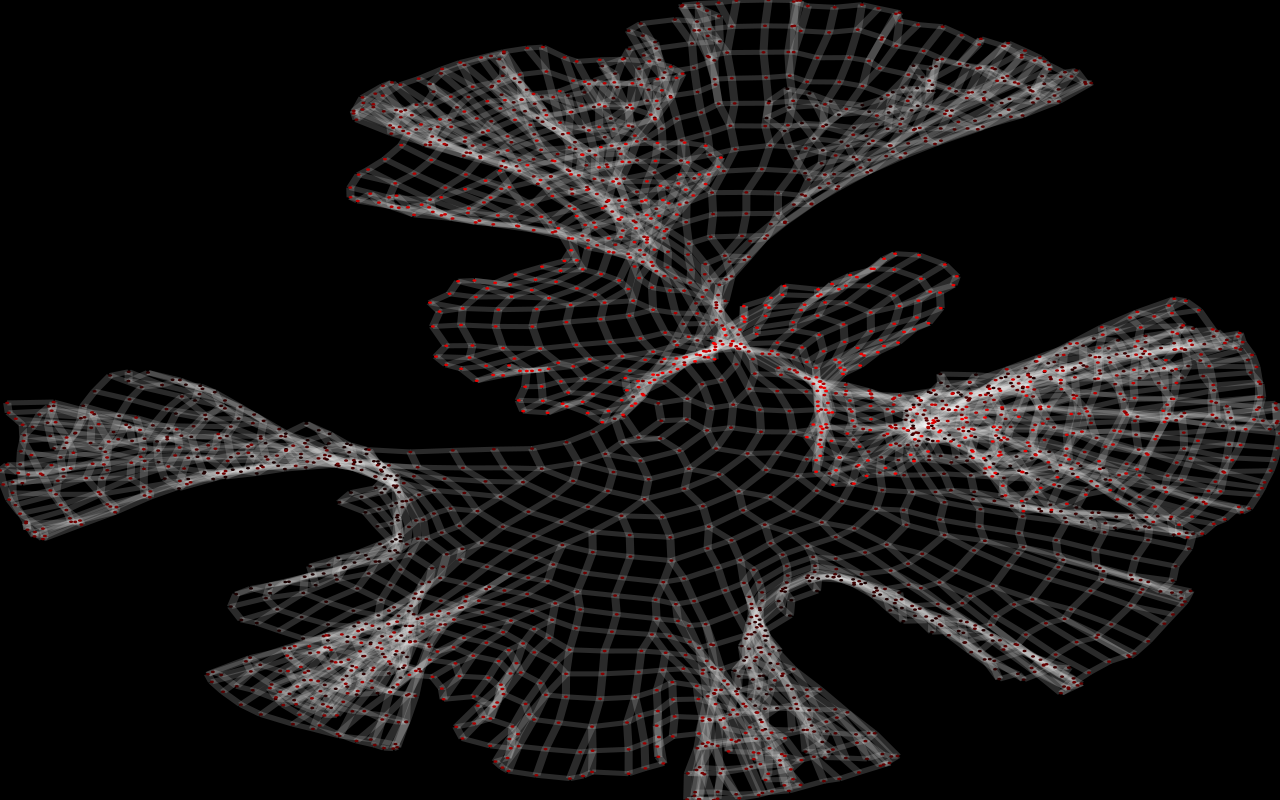

Simulated Knitting

Alternate title: I'm a big fan of the Fruchterman & Reingold graph embedding algorithm.

Recently I created a site for trying to predict the pattern of colors in pooled knitting.

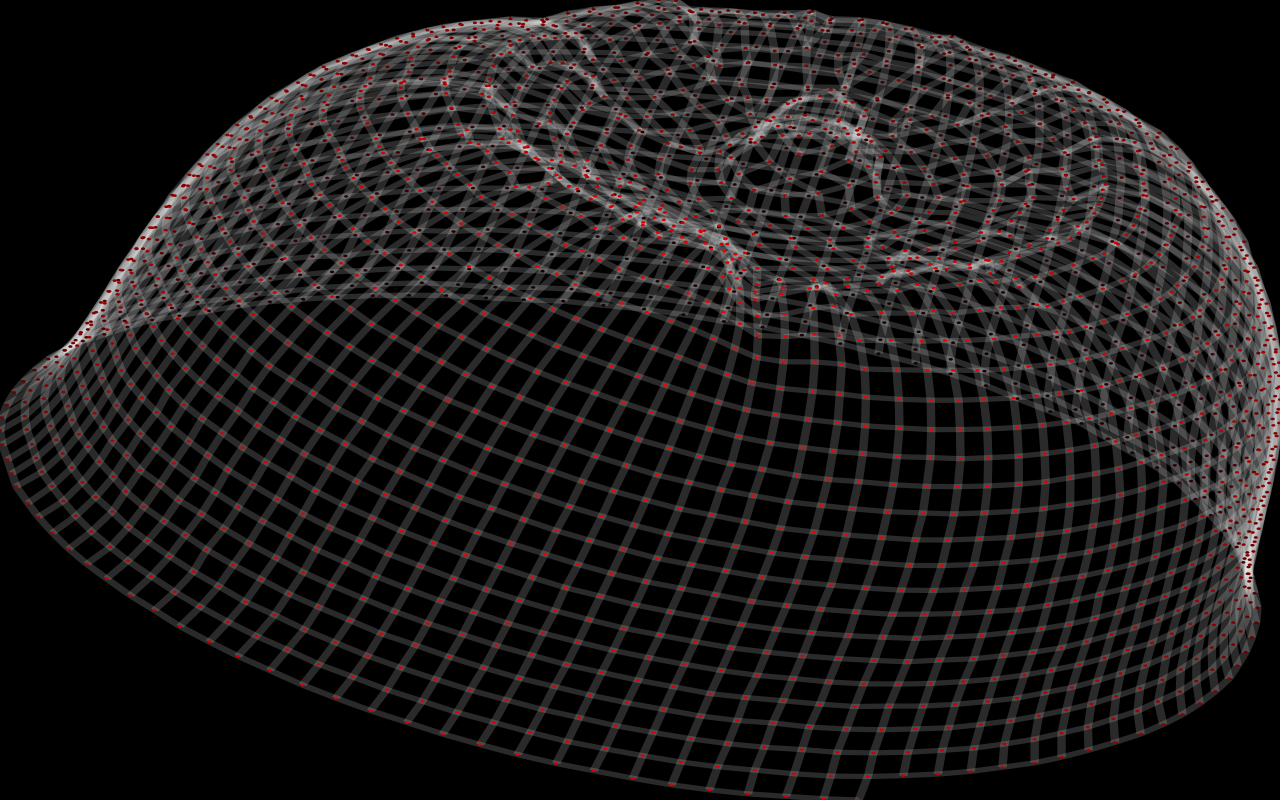

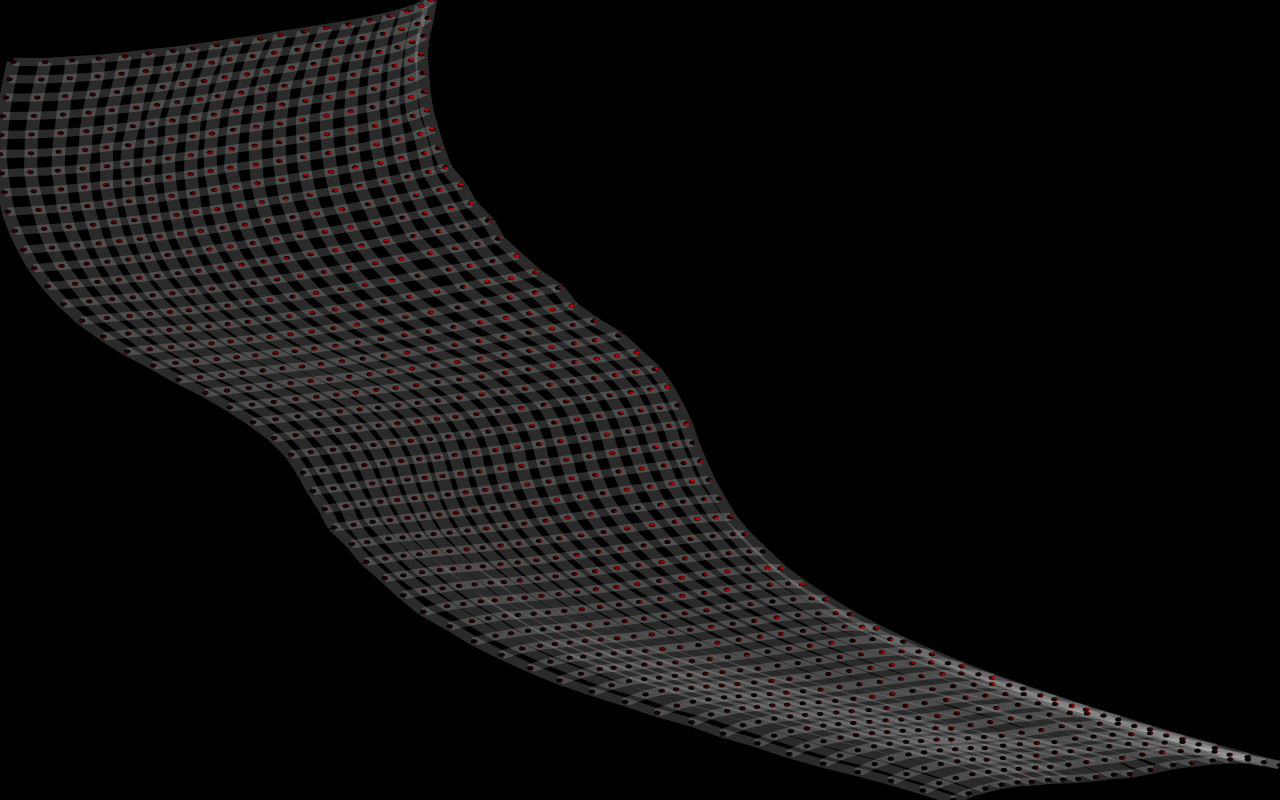

Next I wanted to be able to predict the shape of a knitted object. I'll explain more in a minute, but first here are some examples (click on the images for a gif movie):

A hat:

Some flat knitting (doesn't look flat to you? I'll explain in a minute):

So, what's going on here? I've done two things:

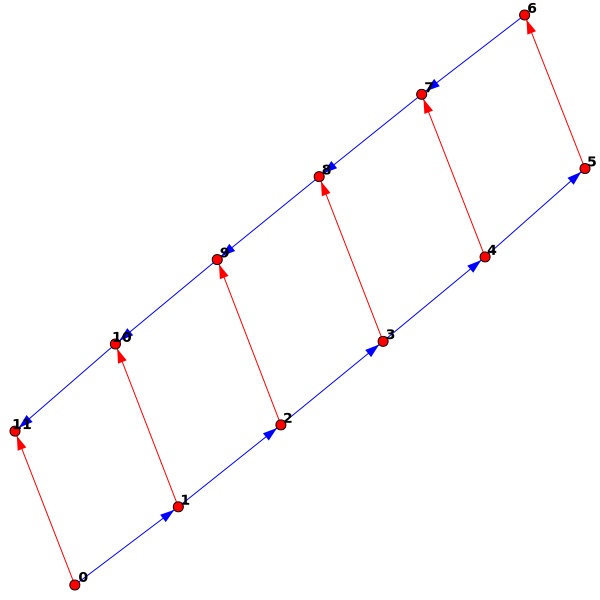

So, I just have to construct graphs that correspond to things you might knit. To illustrate the idea, here's a very simple example:

And the code (after I wrote the appropriate methods) to generate it:

This creates the graph, adds five stitches, starts a new row, and then finishes that row. The "flat knitting" above was created just like this: "cast on" 50 stitches, and then knit 20 rows back and forth. Why didn't it lie flat, though? The real answer: Forcing it to lie flat isn't what would make sense! The real knitting (just like a real "flat") piece of paper has the option to bend in one direction at a time. The algorithm has a certain distance apart that it wants each pair of linked vertices to be, and the object doesn't have to lie flat to achieve that.

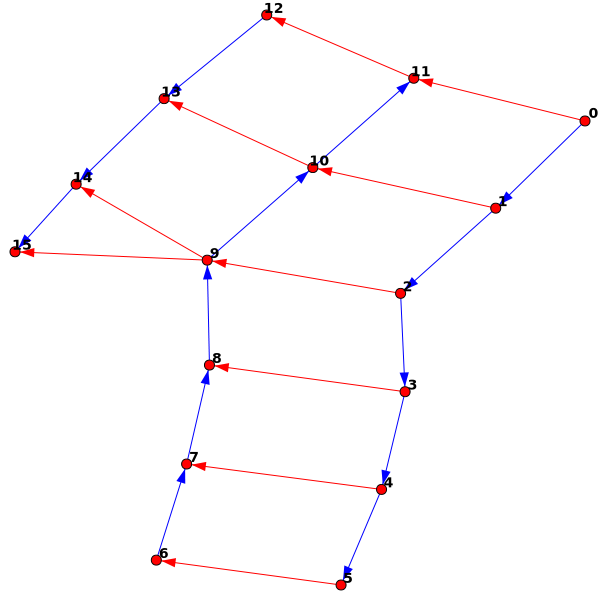

Extending this a little, I can add an "increase" (two stitches connected to the same stitch in the previous row):

We can also knit "in the round" by knitting a row and then connecting the current stitch to the first stitch:

Here's an example of the same thing (but larger -- 20 circular rows of 50 stitches each) embedded in 3d:

As a final example, let's try to make something with constant negative curvature (a pseudo-sphere). Geeky knitters often do this for fun by knitting in a circle (as above) and increasing the number of stitches at a constant rate:

The way I like to think about this mathematically, negative curvature for a surface means that small discs of a given radius have more volume than on a flat plane (and the opposite for positive curvature). Think of squashing a saddle flat and having extra material, vs. squashing a hemisphere flat and not having enough.

And here's mine:

Here's my code on GitHub.

(Including a modified version of this code for plotting in 3D. Thanks Michael!)

[Edit: Using Ubuntu 10.04.3 LTS & Python 2.6.5, I installed python-iGraph with:

sudo aptitude install python-igraph

Apparently that's not working in Ubuntu 11.10. See this G+ thread for more. Thanks, Shae.]

Recently I created a site for trying to predict the pattern of colors in pooled knitting.

Next I wanted to be able to predict the shape of a knitted object. I'll explain more in a minute, but first here are some examples (click on the images for a gif movie):

A hat:

Some flat knitting (doesn't look flat to you? I'll explain in a minute):

So, what's going on here? I've done two things:

- Construct an abstract graph corresponding to something someone might knit

- Embed that graph in 3D (determine where each of the points of the graph should go)

- Attractive: Applies to each pair of vertices connected by an edge, tries to pull them close to each other like a spring.

- Repulsive: Applies to each pair of vertices (regardless of whether they are connected by an edge), pushes them apart. Primarily a close-range force, infinitely strong in the limit as the points get very close together.

So, I just have to construct graphs that correspond to things you might knit. To illustrate the idea, here's a very simple example:

And the code (after I wrote the appropriate methods) to generate it:

g = KnittedGraph() #Create new "KnittedGraph" object g.AddStitches(5) #Add five stitches g.NewRow() #Create a new row g.FinishRow() #Finish the new row

This creates the graph, adds five stitches, starts a new row, and then finishes that row. The "flat knitting" above was created just like this: "cast on" 50 stitches, and then knit 20 rows back and forth. Why didn't it lie flat, though? The real answer: Forcing it to lie flat isn't what would make sense! The real knitting (just like a real "flat") piece of paper has the option to bend in one direction at a time. The algorithm has a certain distance apart that it wants each pair of linked vertices to be, and the object doesn't have to lie flat to achieve that.

Extending this a little, I can add an "increase" (two stitches connected to the same stitch in the previous row):

g = KnittedGraph() #Create new "KnittedGraph" object g.AddStitches(5) #Add five stitches g.NewRow() #Create a new row g.FinishRow() #Finish the new row g.NewRow() g.AddStitch() g.Increase() #Add an "increase" (one stitch connected to two in the previous row)

We can also knit "in the round" by knitting a row and then connecting the current stitch to the first stitch:

g = KnittedGraph() g.AddStitches(8) g.ConnectToZero() #Connect the current stitch to the original stitch g.AddStitches(16) #Knit two more rows (by adding 16 stitches)

Here's an example of the same thing (but larger -- 20 circular rows of 50 stitches each) embedded in 3d:

As a final example, let's try to make something with constant negative curvature (a pseudo-sphere). Geeky knitters often do this for fun by knitting in a circle (as above) and increasing the number of stitches at a constant rate:

The way I like to think about this mathematically, negative curvature for a surface means that small discs of a given radius have more volume than on a flat plane (and the opposite for positive curvature). Think of squashing a saddle flat and having extra material, vs. squashing a hemisphere flat and not having enough.

And here's mine:

Here's my code on GitHub.

(Including a modified version of this code for plotting in 3D. Thanks Michael!)

[Edit: Using Ubuntu 10.04.3 LTS & Python 2.6.5, I installed python-iGraph with:

sudo aptitude install python-igraph

Apparently that's not working in Ubuntu 11.10. See this G+ thread for more. Thanks, Shae.]

Monday, August 8, 2011

Avoiding responsibility with mitigated speech

[EDIT: Comments here and elsewhere have made it clear to me that I should have been a lot more careful in how I wrote this -- in particular, I think it would have been much better as a more personal narrative, about tendencies I have, at times, noticed in myself. Sometimes I've used this 'mitigated speech' in ways or for reasons that I think I should avoid. Certainly in many, many situations, 'mitigated speech' is very helpful to achieving the goals at hand. Sometime I want to take a more thorough look at indirectness and its uses. This should have been more about particular behaviors in a particular environment in a particular person (me) -- sorry!]

Our managers at work recently read Gladwell's Outliers (warning: I haven’t read it), in which he popularized the notion of mitigated speech (or ‘deferential’ speech), which he defines as "any attempt to downplay or sugarcoat the meaning of what is being said".

Here are six degrees of mitigation:

1. Command – “Strategy X is going to be implemented”

2. Team Obligation Statement – “We need to try strategy X”

3. Team Suggestion – “Why don’t we try strategy X?”

4. Query – “Do you think strategy X would help us in this situation?”

5. Preference – “Perhaps we should take a look at one of these Y alternatives”

6. Hint – “I wonder if we could run into any roadblocks on our current course”

These were each role-played for us with an airplane pilot and copilot, where the copilot had information essential to not crashing the plane. (Apparently, mitigated speech actually causes plane crashes.) In some of the examples (“Do you think we should turn around [instead of crashing into...]?”), mitigated speech prevented the copilot’s message from getting through. I think the lesson we were supposed to take away was pretty simple (too simple!):

1. Sometimes people use mitigated speech, especially when there is a difference of power/position in the hierarchy.

2. Mitigated speech is a form of not saying what you mean.

3. It may be appropriate to temper/mitigate your speech, depending on

your position in the hierarchy. (This is interesting -- not explored very far then, and I won't explore it further myself here.) HOWEVER::

4. Always be aware of what message your trying to convey.

Like I said, too simple -- I don’t think this message will work, because I think it ignores the real reason people talk this way: mitigated speech is a form of cowardice. I’m generalizing here mostly from observing myself, but I’ve seen it enough in others that I think it’s pretty common. It’s what we do when we’re afraid to take responsibility for what we have to say. Sometimes I’ve been working on a project where I thought something should go differently, and I said “Shouldn’t we maybe do ____ because of ____?” Then we did what I thought we shouldn’t do, and it sometimes turned out I was right, and then I thought (wrongly) to myself: “I called that one! I told them it wouldn’t work!”

Of course, I hadn’t actually told them it wouldn’t work. I hadn’t actually said anything. One of the things I’ve been learning is to, in the words of a recent episode of the Lifestyle Business Podcast (focused on advice/encouragement for people starting their own businesses, but it’s useful for those of us inside big companies too, especially those with an intrapreneurial innovation style):

You should “step up and say this is how I think things should go, and this is the

plan”. Give me "something to react to". It helps to be "taking a stand ... so at least you have a way forward".

I really like this idea that taking a stand is the way forward. It’s frustrating when everyone says “it could be this...” or “maybe that...”. Someone should just decide what they think! Propose a plan! That forces the rest of to decide what we think (agree, disagree, whatever), and then we can make some progress. Importantly, this isn’t just a different rhetorical style. It’s actually hard work to decide what you think, and then propose and defend it. But that’s exactly the idea -- forcing ourselves to do that hard work.

And it’s okay to be wrong sometimes. Fear of being wrong is another force (besides laziness) driving us toward “mitigated speech.” So we have to be able trust that we can maintain our credibility in the face of being wrong -- that means trusting in ourselves to deserve the credibility (and of course, having the skills/knowledge to actually deserve it), but also trusting in others not to loose faith.

Learning to trust my colleagues is something else that I believe has helped take a stand more often. I’m lucky I have great people to work with.

In summary: We often use this ‘deferential’ speech, especially with people in positions of power. But those people are busy! They don’t always want you deferring to them for every decision. Of course, someone more powerful than you will often have the final say to decide otherwise. But even that can be made easier for them if you first decide what you think, and then tell them.

Finally, here’s another tip from Ian and Dan in the same episode of the Lifestyle Business Podcast. I think this can also apply in the corporate world as in their entrepreneurial world:

"#5 never ask anything more complicated than a 'yes'"

"If you're asking for resources, or money, or time ... get it all set

up so all you need is that person to say YES. That demonstrates that

you're competent, that you care, and that you're willing to take

responsibility. ... [as a simple example:] -- the

smallest deal possible is getting a cup of coffee with somebody. 'How

do you feel about grabbing a cup of coffee sometime?' It's bad enough

that you want me to figure out how we're going to be doing this, when

we're going to be doing this, or even if I want to be doing this. But

you want to know about my feelings, too? I've gotta write a

5-paragraph essay to this person. Give me "I'm gonna be at the

Starbucks at 8pm Wednesday night. Can you swing by? Just make it easy

on me."

Our managers at work recently read Gladwell's Outliers (warning: I haven’t read it), in which he popularized the notion of mitigated speech (or ‘deferential’ speech), which he defines as "any attempt to downplay or sugarcoat the meaning of what is being said".

Here are six degrees of mitigation:

1. Command – “Strategy X is going to be implemented”

2. Team Obligation Statement – “We need to try strategy X”

3. Team Suggestion – “Why don’t we try strategy X?”

4. Query – “Do you think strategy X would help us in this situation?”

5. Preference – “Perhaps we should take a look at one of these Y alternatives”

6. Hint – “I wonder if we could run into any roadblocks on our current course”

These were each role-played for us with an airplane pilot and copilot, where the copilot had information essential to not crashing the plane. (Apparently, mitigated speech actually causes plane crashes.) In some of the examples (“Do you think we should turn around [instead of crashing into...]?”), mitigated speech prevented the copilot’s message from getting through. I think the lesson we were supposed to take away was pretty simple (too simple!):

1. Sometimes people use mitigated speech, especially when there is a difference of power/position in the hierarchy.

2. Mitigated speech is a form of not saying what you mean.

3. It may be appropriate to temper/mitigate your speech, depending on

your position in the hierarchy. (This is interesting -- not explored very far then, and I won't explore it further myself here.) HOWEVER::

4. Always be aware of what message your trying to convey.

Like I said, too simple -- I don’t think this message will work, because I think it ignores the real reason people talk this way: mitigated speech is a form of cowardice. I’m generalizing here mostly from observing myself, but I’ve seen it enough in others that I think it’s pretty common. It’s what we do when we’re afraid to take responsibility for what we have to say. Sometimes I’ve been working on a project where I thought something should go differently, and I said “Shouldn’t we maybe do ____ because of ____?” Then we did what I thought we shouldn’t do, and it sometimes turned out I was right, and then I thought (wrongly) to myself: “I called that one! I told them it wouldn’t work!”

Of course, I hadn’t actually told them it wouldn’t work. I hadn’t actually said anything. One of the things I’ve been learning is to, in the words of a recent episode of the Lifestyle Business Podcast (focused on advice/encouragement for people starting their own businesses, but it’s useful for those of us inside big companies too, especially those with an intrapreneurial innovation style):

You should “step up and say this is how I think things should go, and this is the

plan”. Give me "something to react to". It helps to be "taking a stand ... so at least you have a way forward".

I really like this idea that taking a stand is the way forward. It’s frustrating when everyone says “it could be this...” or “maybe that...”. Someone should just decide what they think! Propose a plan! That forces the rest of to decide what we think (agree, disagree, whatever), and then we can make some progress. Importantly, this isn’t just a different rhetorical style. It’s actually hard work to decide what you think, and then propose and defend it. But that’s exactly the idea -- forcing ourselves to do that hard work.

And it’s okay to be wrong sometimes. Fear of being wrong is another force (besides laziness) driving us toward “mitigated speech.” So we have to be able trust that we can maintain our credibility in the face of being wrong -- that means trusting in ourselves to deserve the credibility (and of course, having the skills/knowledge to actually deserve it), but also trusting in others not to loose faith.

Learning to trust my colleagues is something else that I believe has helped take a stand more often. I’m lucky I have great people to work with.

In summary: We often use this ‘deferential’ speech, especially with people in positions of power. But those people are busy! They don’t always want you deferring to them for every decision. Of course, someone more powerful than you will often have the final say to decide otherwise. But even that can be made easier for them if you first decide what you think, and then tell them.

Finally, here’s another tip from Ian and Dan in the same episode of the Lifestyle Business Podcast. I think this can also apply in the corporate world as in their entrepreneurial world:

"#5 never ask anything more complicated than a 'yes'"

"If you're asking for resources, or money, or time ... get it all set

up so all you need is that person to say YES. That demonstrates that

you're competent, that you care, and that you're willing to take

responsibility. ... [as a simple example:] -- the

smallest deal possible is getting a cup of coffee with somebody. 'How

do you feel about grabbing a cup of coffee sometime?' It's bad enough

that you want me to figure out how we're going to be doing this, when

we're going to be doing this, or even if I want to be doing this. But

you want to know about my feelings, too? I've gotta write a

5-paragraph essay to this person. Give me "I'm gonna be at the

Starbucks at 8pm Wednesday night. Can you swing by? Just make it easy

on me."

Sunday, April 24, 2011

my first python script

555 = ((5) - ((5) * ((5) * (5)))) + (((5) * ((5) + (5))) + (((5) * (5)) * ((5) * (5))))

At her blog, Sue VanHattum mentions this puzzle: Use five 5's to make 37.

That got me thinking about making things out of fives (or some other number), so I wrote a Python script (I'm trying to learn how to use Python to accomplish things, and this seemed like a fun exercise) to help me think about that. I'm never used object oriented programming before, and this seemed like a good opportunity to define class (which I called BuildNum) that would let you build a number out of other numbers (via the operations: add, subtract, multiply, divide -- I guess I left out exponentiation for now, which is needed for Sue's original puzzle). The class keeps track of:

Then I decided (I don't really know why!) to look for ways of combining fives to get a string of fives. So I wrote a function that takes a list of BuildNum objects and builds the next generation.

The example in the first line of this post is in generation 5 (where generation 1 consists of just "5"). There aren't any 555's in any generation below 5.

Some more notes:

So, what if we we're interested in everything you can make out of five 5's? What's the best approach? I'm thinking maybe you'd construct all of the trees with five leaves to define the parentheses/groupings, and then inserting all different combinations of operations between the branches.

Someone commented on Sue's posting asking about what we can do with students from this kind of stuff. I'm not a teacher, but my first thought is on growth rates: How many ways of forming a number do you have in generation n? (It would also be interesting to try to figure out -- without brute force, which is gets infeasible at some point -- how many unique numbers there are. But that sounds hard.)

Here's the code. I don't know if I'll spend much more time improving this particular code, or writing more related to this problem. Please understand that I'm just beginning to code in Python, so it's probably terrible! But I'll appreciate any advice you have for a beginner.

At her blog, Sue VanHattum mentions this puzzle: Use five 5's to make 37.

That got me thinking about making things out of fives (or some other number), so I wrote a Python script (I'm trying to learn how to use Python to accomplish things, and this seemed like a fun exercise) to help me think about that. I'm never used object oriented programming before, and this seemed like a good opportunity to define class (which I called BuildNum) that would let you build a number out of other numbers (via the operations: add, subtract, multiply, divide -- I guess I left out exponentiation for now, which is needed for Sue's original puzzle). The class keeps track of:

- the current value of the number

- a string representing how the number was formed

- the "generation" of the number, defined as the largest of the generations of the component numbers plus 1

- (e.g., "5" is generation 1, "5 + 5" is generation 2, "(5+5)/5" is generation 3)

- a list of the "seeds" (generation 1 numbers) used to make the current number

Here's some sample execution using this class:

Then I decided (I don't really know why!) to look for ways of combining fives to get a string of fives. So I wrote a function that takes a list of BuildNum objects and builds the next generation.

The example in the first line of this post is in generation 5 (where generation 1 consists of just "5"). There aren't any 555's in any generation below 5.

Some more notes:

- If generation 1 has just "5", then generation 4 (for example) has all ways of combining four 5's, but it also has a lot more than that. Generation 2 can have up to two 5's, generation 3 can have up to four 5's, and generation 4 can have up to eight 5's.

- But generation 4 doesn't have everything with up to eight 5's:

- This (with only seven 5's) is in generation 5, but not in generation four:

[((5+5)+5)*((5+5)+5)] + 5

So, what if we we're interested in everything you can make out of five 5's? What's the best approach? I'm thinking maybe you'd construct all of the trees with five leaves to define the parentheses/groupings, and then inserting all different combinations of operations between the branches.

Someone commented on Sue's posting asking about what we can do with students from this kind of stuff. I'm not a teacher, but my first thought is on growth rates: How many ways of forming a number do you have in generation n? (It would also be interesting to try to figure out -- without brute force, which is gets infeasible at some point -- how many unique numbers there are. But that sounds hard.)

Here's the code. I don't know if I'll spend much more time improving this particular code, or writing more related to this problem. Please understand that I'm just beginning to code in Python, so it's probably terrible! But I'll appreciate any advice you have for a beginner.

Monday, April 18, 2011

pre-generate pictures of your knitting

[Update: I've made a website for this: http://www.plannedpooling.com/.]

This was a birthday present for my spouse. (Don't worry--I also covered a lot of things -- fruit/nuts/cocoa puffs/etc -- in chocolate. But I think both were appreciated!)

Sometimes people knit with with yarn that has a repeating sequence of colors -- for example, purple, then yellow, then green -- in a more-or-less regular repeating pattern. Most frequently, the colors in the finished product combine without making much of a distinguishable pattern (somewhat like the picture in the lower right, below). But sometimes stitches of the same color group together (as in the upper right picture), and sometimes other patterns emerge.

Inspired by someone at www.ravelry.com who seems to have done the same thing in SAS, I've written some R code that tries to predict what sort of pattern will emerge in the finishes product based on the repeating pattern of colors in the yarn (how many stitches of each color?), the width (in stitches) of the square, and whether your knitting is "flat" (back and forth -- starts next row where last one finished) or "circular" (starts the next row where the last one started).

Here's the function call that generated these plots:

Pooling(

repeats = c(17,5,17,5),

colors = c("#AC00FF",rgb(.71,.5,.9),"green","gold"),

widths = c(46,52,62,82),

type = "flat",

ManyPerPage=TRUE,

rows=2,

cols=2

)

Here's the code that defines this function, which also has an example function call with much more commenting to explain each of the inputs.

I've added functions for use in RStudio that adds a slider controlling the width:

I hope knitters may find this useful, or others may find it fun to play with. (Especially people who might want to play with any number theory that might be involved here?)

If you're new to R, you just have to download R and run the code up through the function call above (well, really just the code defining the two functions used), and then play with it yourself.

Saturday, February 12, 2011

Installing R on Amazon Linux

When I wanted to run R (statistical software) on the Amazon "cloud", the first thing I found was this post explaining how to launch an Amazon EC2 "instance" (virtual machine) and pointing to an "image" (contains operating system and whatever else you want) that had Ubuntu and R.

Then I wanted 64-bit R, and it turned out that by far the easiest thing was to launch a Windows instance on Amazon EC2. I googled for an explanation, but it doesn't really need one. You launch a Windows instance, make sure the security group allows RDP, and connect with Remote Desktop. Works very smoothly. I could even connect with my phone.

But now I wanted to run R on a virtual machine which I could (for free) leave running all the time. For this I needed to run Amazon Linux on a "micro" instance, which ruled out both of the above. I launched a micro instance running Amazon Linux, and ssh'd into it.

Okay, now how do I install R?

I'll outline the process I went through. But I also went through a lot more confusion/frustration than I'll describe!

Then I wanted 64-bit R, and it turned out that by far the easiest thing was to launch a Windows instance on Amazon EC2. I googled for an explanation, but it doesn't really need one. You launch a Windows instance, make sure the security group allows RDP, and connect with Remote Desktop. Works very smoothly. I could even connect with my phone.

But now I wanted to run R on a virtual machine which I could (for free) leave running all the time. For this I needed to run Amazon Linux on a "micro" instance, which ruled out both of the above. I launched a micro instance running Amazon Linux, and ssh'd into it.

Okay, now how do I install R?

I'll outline the process I went through. But I also went through a lot more confusion/frustration than I'll describe!

- Can I use an automatic installer? Apt-get and yum seem to work for various people on the internet. But not for me! Oh well.

- How do I install R? Where are the files I need to download to install R?

- Googled, found installation instructions and a file.

- How do I get a file? Googled, found wget.

wget http://cran.at.r-project.org/src/base/R-2/R-2.12.1.tar.gz

- How do I unzip this? Googled, found:

tar xf R-2.12.1.tar.gz

cd R-2.12.1

./configure

- There's an error message! Google the error message to learn I need a C compiler. I had learned earlier that yum is for installing things, and googling reveals that gcc is a C compiler:

sudo yum install gcc

./configure

- Another error message! Google the message to find that I need a C++ compiler. Maybe something like gcc will help...

yum search gcc

- Yep! I now see gcc-c++, so I'll install that:

sudo yum install gcc-c++

./configure

- Another error message! I need a fortran compiler. In the search above, I saw gcc-gfortran, so:

sudo yum install gcc-gfortran

./configure

- Another error message! Google the message, revealing that I need readline-devel. I still don't know what that is, but this works:

sudo yum install readline-devel

./configure

- Another error message! Google shows me a post from someone who seems a bit confused, but less confused than I am. Following the advice in that post (which seems like it will get me an incomplete R build, maybe with something related to windowing missing--but I won't be using windows anyway):

./configure --with-x=no- It works!

make

PATH=$PATH:~/R-2.12.1/bin/

R

- Yippeee! I have R now. You can follow these steps, or I think you can use the public AMI I've created, AmazonLinuxWithR_c.

Saturday, January 29, 2011

risk-aversion in charitable giving

My friend Jeff wrote that you shouldn't try to avoid risk in charitable giving, I wrote with some thoughts about why it's maybe conceivable that you should diversify charitable giving, and he replied. His reply outlines some reasons that you might think you should diversify, and addresses all of them.

Jeff's reasons that you might think you should diversify probably make more sense that what I had in mind, but I want to write some about what I did have in mind, since it's a little different. (I don't think I explained my thinking very well in that note!)

Jeff's reasons that you might think you should diversify probably make more sense that what I had in mind, but I want to write some about what I did have in mind, since it's a little different. (I don't think I explained my thinking very well in that note!)

Monday, January 10, 2011

Saturday, January 1, 2011

Animation from Kaggle traffic prediction competition

Below: two animations (same one twice, just as different speeds) I made from the highway data for the Kaggle traffic prediction competition.

The data are travel times for 61 different sections of Sydney's M4 freeway, in both directions. The data comes in 3-minute intervals and goes from March 2010 to November 2010, but my video goes through only one week of it in 27-minute intervals to minimize file size.

In my display, I've normalized by dividing each section by its average travel time. There is a red line in the middle dividing the data for the two directions--so each point is really for the same location as its reflection.

There's a bunch that I don't really know about this data (how are travel times measured?), but making it was fun anyway. I intended to participate in the prediction competition, but I don't think I really will.

With the code I used, you could create a video at a different time scale, or showing more of the time covered.)

I'm very new to R (and to having a blog, for that matter)--so I'd appreciate any advice that anyone has for me about this stuff.

Thanks to:

Animation isn't working for some? Try these link1, link2. Need flash, either way.

If anyone has advice for me about what the problem is, I'd appreciate that. Maybe it's related to the fact that I'm trying to embed these in a post at Blogger?]

The data are travel times for 61 different sections of Sydney's M4 freeway, in both directions. The data comes in 3-minute intervals and goes from March 2010 to November 2010, but my video goes through only one week of it in 27-minute intervals to minimize file size.

In my display, I've normalized by dividing each section by its average travel time. There is a red line in the middle dividing the data for the two directions--so each point is really for the same location as its reflection.

There's a bunch that I don't really know about this data (how are travel times measured?), but making it was fun anyway. I intended to participate in the prediction competition, but I don't think I really will.

With the code I used, you could create a video at a different time scale, or showing more of the time covered.)

I'm very new to R (and to having a blog, for that matter)--so I'd appreciate any advice that anyone has for me about this stuff.

Thanks to:

- Kaggle

- NSW Roads and Traffic Authorit

- David Smith, at Revolution Analytics for a post showing how to create flash animations in R

- Somene on the internet for a a page showing how to include the flash animation in a webpage

Animation isn't working for some? Try these link1, link2. Need flash, either way.

If anyone has advice for me about what the problem is, I'd appreciate that. Maybe it's related to the fact that I'm trying to embed these in a post at Blogger?]

Subscribe to:

Posts (Atom)